Introduction

Machine learning promises to revolutionize image recognition, but sometimes it learns the wrong lesson. One memorable failure involved a model trained to identify trout that misclassified them badly on real-world images. Why? Because most of the training images showed people holding trout, not the fish on their own.

This isn’t an isolated case. It’s part of a broader pattern where machine learning models latch onto irrelevant features, leading to spectacular errors. Let’s dive into the trout fiasco and explore similar examples that reveal the hidden pitfalls of AI training.

🎣 The Trout Identification Failure

What Happened

Researchers set out to build a model that could identify trout in images. They trained it on a dataset of photos labeled “trout.” But most of those images showed people holding trout, often outdoors, with hands, fishing gear, and backgrounds dominating the frame.

The Result

The model didn’t learn what a trout looks like. It learned:

- What a person holding a fish looks like

- What fishing scenes typically contain

- What outdoor lighting and hand positions suggest

When tested on images of trout without people, the model failed — often misclassifying the fish entirely.

Why It Failed

This is a classic case of dataset bias:

- The model learned correlated features, not causal ones

- It couldn’t generalize beyond the context it was trained on

- It mistook background and human presence for key indicators of “trout”
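To make the correlated-versus-causal distinction concrete, here is a minimal synthetic sketch, not the original trout experiment. Everything in it is an assumption made for illustration: a two-feature toy dataset in which `shape` stands in for the fish itself (a weak but genuine signal) and `context` stands in for "a person is holding it" (a strong signal that only matches the label in the training set), plus a small hand-written logistic regression.

```python
import numpy as np

def make_data(n, shortcut_matches_label, rng):
    """Toy data: one weak genuine feature and one strong contextual feature."""
    y = rng.integers(0, 2, size=n)
    sign = 2 * y - 1
    # Feature 0: weak, noisy signal that truly depends on the label.
    shape = 0.5 * sign + rng.normal(0.0, 1.0, size=n)
    # Feature 1: strong signal that follows the label only when the shortcut holds.
    context_sign = sign if shortcut_matches_label else rng.choice([-1, 1], size=n)
    context = 2.0 * context_sign + rng.normal(0.0, 0.5, size=n)
    return np.column_stack([shape, context]), y

def train_logreg(X, y, lr=0.1, steps=500):
    """Plain gradient descent on the logistic loss."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-np.clip(X @ w + b, -30, 30)))
        w -= lr * X.T @ (p - y) / len(y)
        b -= lr * np.mean(p - y)
    return w, b

def accuracy(X, y, w, b):
    return float(np.mean((X @ w + b > 0) == (y == 1)))

rng = np.random.default_rng(0)
X_train, y_train = make_data(2000, shortcut_matches_label=True, rng=rng)
X_shift, y_shift = make_data(2000, shortcut_matches_label=False, rng=rng)

w, b = train_logreg(X_train, y_train)
train_acc = accuracy(X_train, y_train, w, b)
shifted_acc = accuracy(X_shift, y_shift, w, b)

print("weights (shape, context):", w)
print("accuracy with shortcut intact:", train_acc)
print("accuracy with shortcut broken:", shifted_acc)
```

In this toy setup the classifier puts most of its weight on `context`, so accuracy falls sharply once that feature stops tracking the label, even though the genuine `shape` signal is unchanged.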

🐺 The Wolf vs. Husky Case

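Another famous example comes from the 2016 paper that introduced the LIME explanation method (Ribeiro, Singh, and Guestrin, “Why Should I Trust You?”), in which a classifier was deliberately trained on biased data to distinguish wolves from huskies.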

The Problem

The training data had a hidden bias:

- Wolf images often had snow in the background

- Husky images did not

The Result

The model learned to associate snow with wolves. It wasn’t identifying the animals — it was identifying the environment.

When shown a husky in snow, it labeled it a wolf. When shown a wolf in a grassy field, it failed.
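A bias like this can often be caught before training by counting how labels co-occur with a contextual attribute. The sketch below assumes each image already carries a background tag; the `records` list and its counts are invented for illustration and are not taken from the study.

```python
from collections import Counter

# Hypothetical (label, background) annotations, one pair per training image.
records = (
    [("wolf", "snow")] * 18
    + [("wolf", "grass")] * 2
    + [("husky", "snow")] * 3
    + [("husky", "grass")] * 17
)

def label_rates_by_attribute(records):
    """Return P(label | attribute) for every (label, attribute) pair seen."""
    pair_counts = Counter(records)
    attr_totals = Counter(attr for _, attr in records)
    return {
        (label, attr): count / attr_totals[attr]
        for (label, attr), count in pair_counts.items()
    }

rates = label_rates_by_attribute(records)
for (label, attr), rate in sorted(rates.items()):
    print(f"P({label} | {attr}) = {rate:.2f}")
```

If knowing the background alone predicts the label most of the time, a model can score well without ever looking at the animal, which is a signal to rebalance or re-collect the data.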

🧠 Other Notable Failures

1. Tank Detection in Military AI

A Cold War-era story (possibly apocryphal) describes a model trained to detect tanks. As the story is usually told, it learned to tell sunny days from cloudy ones rather than to spot tanks, because all the tank photos were taken on sunny days.

2. Pneumonia Detection in Chest X-rays

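A model trained to detect pneumonia performed well on data from the hospitals it was trained on, until researchers found it was partly relying on hospital-specific cues visible in the images, such as the markers technicians place on the film, rather than on the lungs themselves. Because pneumonia rates differed between hospitals, recognizing the hospital was enough to score well.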

3. Skin Cancer Detection

An AI model flagged images with rulers (used by doctors to measure lesions) as cancerous — because most cancer images had rulers, and benign ones didn’t.

🔍 Lessons Learned

These failures highlight key principles in machine learning:

1. Context Matters

Models often learn from contextual clues, not the object itself.

2. Data Bias Is Subtle

Even well-labeled datasets can contain hidden biases that mislead models.

3. Generalization Is Hard

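Models must be tested on out-of-distribution data, such as trout photographed without people, because strong accuracy on data that resembles the training set says little about robustness.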

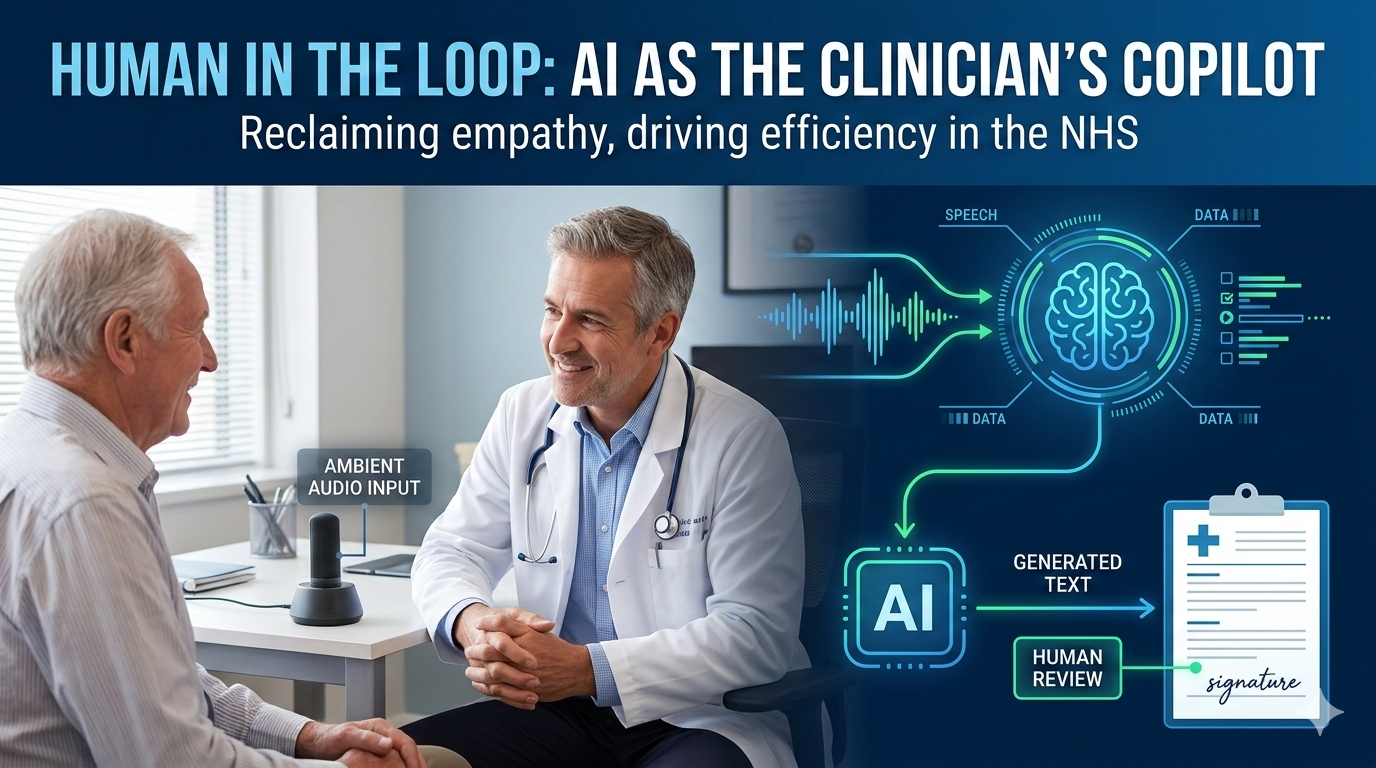

4. Human Oversight Is Crucial

Domain experts are essential to spot misleading patterns and validate model behavior.
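One way to act on the generalization lesson is to report accuracy per context rather than as a single number. The following is a minimal sketch; the helper `accuracy_by_slice`, the slice names, and the tiny example lists are all hypothetical.

```python
from collections import defaultdict

def accuracy_by_slice(y_true, y_pred, slices):
    """Accuracy computed separately for each slice tag."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, tag in zip(y_true, y_pred, slices):
        total[tag] += 1
        correct[tag] += int(truth == pred)
    return {tag: correct[tag] / total[tag] for tag in total}

# Invented evaluation results: four images with a person, four of the fish alone.
y_true = ["trout", "trout", "trout", "other", "trout", "trout", "trout", "other"]
y_pred = ["trout", "trout", "trout", "other", "other", "other", "trout", "other"]
slices = ["with_person"] * 4 + ["fish_only"] * 4

report = accuracy_by_slice(y_true, y_pred, slices)
overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print("overall:", overall)
print("per slice:", report)
```

An aggregate score can look acceptable while one slice is close to chance, so the per-slice view is what exposes the trout-style failure.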

🧪 How to Avoid These Pitfalls

- Diversify training data: Include varied backgrounds, lighting, and contexts.

- Use saliency maps: Visualize what the model is focusing on.

- Test on edge cases: Challenge the model with atypical examples.

- Audit datasets: Look for unintended correlations.

- Involve domain experts: They can spot what the model might be missing.
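As a rough illustration of the saliency-map suggestion, the sketch below uses occlusion sensitivity, one simple way to see which image regions drive a score: blank out one patch at a time and record how much the score drops. Gradient-based saliency methods are a common alternative. The `shortcut_model` function is a hypothetical stand-in for a trained model, written so that it deliberately looks only at the top-left corner.

```python
import numpy as np

def occlusion_saliency(score_fn, image, patch=4, fill=0.0):
    """Score drop caused by blanking each patch; larger means more influential."""
    base = score_fn(image)
    rows, cols = image.shape[0] // patch, image.shape[1] // patch
    heat = np.zeros((rows, cols))
    for i in range(rows):
        for j in range(cols):
            occluded = image.copy()
            occluded[i * patch:(i + 1) * patch, j * patch:(j + 1) * patch] = fill
            heat[i, j] = base - score_fn(occluded)
    return heat

def shortcut_model(image):
    # Hypothetical model that "cheats": its score depends only on the top-left corner.
    return float(image[:4, :4].mean())

rng = np.random.default_rng(0)
image = rng.uniform(0.5, 1.0, size=(16, 16))

heat = occlusion_saliency(shortcut_model, image)
hottest = tuple(int(k) for k in np.unravel_index(np.argmax(heat), heat.shape))
print("most influential patch (row, col):", hottest)
```

If the hottest patches sit on the background, a hand, or a ruler rather than on the object of interest, the model has probably learned a shortcut.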

Conclusion

The trout identification failure is more than a funny anecdote — it’s a cautionary tale. Machine learning models are only as good as the data they learn from. When that data contains hidden biases, the results can be misleading, unreliable, or downright wrong.

As AI becomes more embedded in decision-making, understanding these failures is essential. They remind us that intelligence — artificial or not — must be grounded in context, clarity, and careful design.